Webinar Transcript and Video: Multicloud Over Coffee With Google Cloud

- Cloud networking, Partners

- March 12, 2021

Google Cloud, Megaport, Qwinix and 1623 Farnam recently held a webinar and talked about deploying multicloud, specifically using Google Anthos, to build agile, future-proof networks in a time of increased IT complexity caused by distributed workforces and applications.

We’ve provided edited transcript excerpts from the webinar below, as well as the video, where you can watch the event in its entirety.

The presenters were:

- Greg Elliott, VP of Business Development, 1623 Farnam, LLC

- John Bacon, Cloud Partner Engineer, Google Cloud

- David McDaniel, VP of Cloud Professional Services, Qwinix

- Nick De Cristofaro, Networking Specialist Customer Engineer, Google Cloud

- Kyle Moreta, Solutions Architect, Megaport

Read more about Megaport’s partnership with Google Cloud Partner Interconnect.

Handling the challenges of hybrid cloud

Bacon, Google Cloud: So most organizations have either taken the leap to public cloud or at least have a cloud strategy that they’re exploring, but a lot of workloads still do remain on-prem… This could be for various reasons like proximity to your end-users, compliance, or data locality rules, and so on. So while organizations are building out a cloud strategy, a big component of that is understanding how to handle multicloud or hybrid cloud.

However, some challenges arise as you start to adopt this strategy. Security versus agility…Developers want to push code to production quickly, no matter where the infrastructure is, but the security teams want to ensure that the code is safe and that the tools used by the developers are verified and trusted, and this can sometimes slow down the development process.

Reliability versus cost…When people think of reliability, they think of adding redundant machines, data protection tools, and other services that can increase your costs.

And lastly, portability versus consistency. This is really important when you start looking at multicloud or hybrid cloud. When I start to run modern applications across different environments like on-prem or multiple clouds, I want that application to be portable. I also want it to be a consistent experience. My infrastructure teams want to deploy on-prem or in GCP or even in another cloud without having to make a significant change or adopt new tools.

The benefits of Google Anthos

Bacon, Google Cloud: [Anthos is] a suite of components that helps with things like policy management, cluster management, and so on. Can it be deployed on-prem or in other clouds as well? … So we have things like Anthos Config Management…Anthos GKE and Anthos Service Mesh…we can run this on-prem, on top of a VMware environment or a bare metal environment. We also support running it on top of AWS and Azure, as well as GCP, of course, and then over to the right, we have something…called attached clusters. And what this means is we can actually connect a non-Google Kubernetes cluster into Anthos and provide you single-pane-of-glass visibility into all those clusters, as well as some additional features…

Now imagine that you have a team at your company that deploys a Kubernetes cluster. So that team has to worry about – how do they enforce policies on that cluster? How do they put security guardrails in place? Which really isn’t that difficult if it’s one team and one cluster. Now imagine that team goes around and they tell everybody else at your company that Kubernetes is amazing and they need to adopt it as well. And so another team starts to use it and then another team. And then they have multiple clusters. Some running for dev, some for prod. And you just end up having tons of teams and tons of clusters out there. And they could be on Google Cloud. They could be on-prem. They could be in another cloud. So that actually becomes very difficult to manage.

Think of some customers, maybe in retail, like a fast food restaurant, they might run a small Kubernetes cluster in each store to handle things like inventory and point of sale, you know, and they might have 500 or a thousand stores. So in these scenarios, how do I ensure that my security policies I set are enforced? How do I ensure that the IT admin at one of these branch offices or in my data center, doesn’t make a change to a cluster that’s running in one of these stores or in my data center?

Well, the answer is config management. Config management allows you to define and enforce policies across your deployments.
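The idea of defining a policy once and enforcing it on every cluster can be sketched in a few lines of Python. This is only an illustration of the concept, not how Anthos implements it: the `Cluster` class, the policy keys, and the cluster names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical desired policy, as it might be kept in one central repository.
DESIRED_POLICY = {
    "require_network_policy": True,
    "allow_privileged_containers": False,
}


@dataclass
class Cluster:
    """Hypothetical stand-in for a Kubernetes cluster and its current settings."""
    name: str
    location: str
    config: dict = field(default_factory=dict)


def find_drift(cluster, desired):
    """Return {setting: (actual, wanted)} for every setting that differs."""
    return {
        key: (cluster.config.get(key), wanted)
        for key, wanted in desired.items()
        if cluster.config.get(key) != wanted
    }


def enforce(cluster, desired):
    """Overwrite drifted settings with the desired values; return what changed."""
    drift = find_drift(cluster, desired)
    for key, (_, wanted) in drift.items():
        cluster.config[key] = wanted
    return drift


clusters = [
    Cluster("store-001", "on-prem", dict(DESIRED_POLICY)),
    # A local admin has changed a setting on this cluster.
    Cluster("store-002", "on-prem", {"require_network_policy": True,
                                     "allow_privileged_containers": True}),
    # A new cluster with no policy applied yet.
    Cluster("dev-gke", "gcp", {}),
]

for cluster in clusters:
    changed = enforce(cluster, DESIRED_POLICY)
    print(cluster.name, "corrected:", sorted(changed) if changed else "nothing")
```

The same loop works whether there are three clusters or a thousand stores, which is the point of keeping the policy in one place.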

The complexity of typical IT infrastructure

McDaniel, Qwinix: So if you look at your typical IT infrastructure where you’re running a hypervisor, such as VMware or even Citrix Xen, you’re going to run many virtual machines and you’re probably going to have a number of different operating systems. You’re going to have, you know, several Windows, several Linux, maybe some different varieties of Linux…

Want to learn more about Qwinix, a cloud-native partner engineering partner of Google Cloud? Visit Qwinix’s website.

What Migrate for Anthos really helps with is being able to kind of extract the operating system. And you could have a set of Anthos worker nodes that, for example, run Windows. So you’re extracting every copy of that operating system out of the VM and using a common implementation sitting under Anthos so that all your containers get to utilize that same operating system copy.

So it does reduce your licensing and, much as the original virtualization did when we were moving from physical to virtual, it allows you a higher density of application deployment onto your hardware. That really affects your cost and gives you a much better utilization of your hardware. So the container really is very self-contained. When you build a container, you specify the base operating system and all the different packages or software you need and how to run the container. And that is a very powerful thing when it comes to application development.

On running a data center in the middle of the US

Elliott, 1623 Farnam, LLC: We have 50 carriers and ISPs that have a physical fiber presence within our facility, as well as multiple cloud providers. We also have our internet exchange, so lots of peering opportunities…There’s tons of ways to utilize our facility for a hybrid cloud solution.

Using Partner Interconnect within GCP

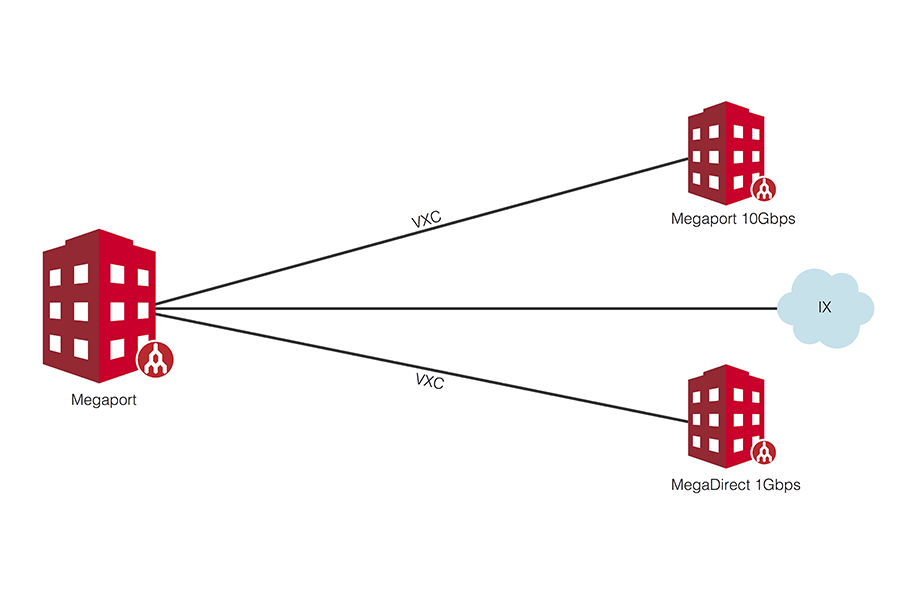

De Cristofaro, Google Cloud: Partner Interconnect within GCP is specifically our way to create a hybrid connection from GCP to an on-prem environment, or it could be to another cloud as well. So in this case…Here, we have the facility at 1623 Farnam, and here, this would be where your workloads are located in the data center…So you may have on-prem workloads already deployed in x86 compute, on-prem routers that are already there. And in this case, if you want to connect to GCP, well, it’s as seamless as making a cross-connect into Megaport…

Effectively Megaport is already, as a partner to us, to Google, they’re already connected to us within GCP. So what that means is you can effectively use their network to connect into GCP.

Download Megaport’s infopaper on Google Cloud Partner Interconnect.

On why private connectivity will save you money and reduce latency

Moreta, Megaport: So really the big benefit of using private connectivity versus just the internet or a VPN is going to be the latency. You are going to get much better latency across the board by using a private connectivity model versus going over the internet or using a VPN. You’re going to have more predictable latency and jitter across there. And you’re also going to see egress savings for the data that you’re pulling down out of the cloud. I think across the board with a VPN or over the internet, egress fees are around $0.08/GB, generally speaking across all three. But if you were to pull that data down using an Interconnect, ExpressRoute, or Direct Connect circuit, those egress fees are going to be pulled down to around $0.02/GB. So some good savings on that…

We can help tie in those different cloud workloads and help simplify the network and help right-size the network for what you’re using, kind of modeling it like your cloud workloads. Why should your networking be any different? Your cloud computing resources are elastic and can change and are scalable. So we think your network should be similar to that.
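To put the egress figures quoted above into perspective, here is a rough worked example in Python. It assumes the two rates are charged per gigabyte transferred, uses a hypothetical volume of 10,000 GB per month, and ignores port and circuit charges, which the excerpt does not quote. Actual pricing varies by provider, region, and volume tier.

```python
# Approximate rates from the webinar, assumed to be USD per GB of egress.
INTERNET_RATE_PER_GB = 0.08   # over the internet or a VPN
PRIVATE_RATE_PER_GB = 0.02    # over a private interconnect circuit


def monthly_egress_cost(gigabytes, rate_per_gb):
    """Egress charge in USD for one month at a flat per-GB rate."""
    return round(gigabytes * rate_per_gb, 2)


def monthly_savings(gigabytes):
    """Difference between internet/VPN egress and private egress, in USD."""
    return round(
        monthly_egress_cost(gigabytes, INTERNET_RATE_PER_GB)
        - monthly_egress_cost(gigabytes, PRIVATE_RATE_PER_GB),
        2,
    )


# Hypothetical workload: 10,000 GB pulled out of the cloud each month.
volume_gb = 10_000
print("Internet/VPN egress:", monthly_egress_cost(volume_gb, INTERNET_RATE_PER_GB))
print("Private egress:     ", monthly_egress_cost(volume_gb, PRIVATE_RATE_PER_GB))
print("Monthly savings:    ", monthly_savings(volume_gb))
```

At these flat rates the egress bill for private connectivity is a quarter of the internet figure, so the saving grows linearly with the amount of data pulled down.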

Watch the full webinar