The Over/Under on SD-WAN

- October 27, 2021

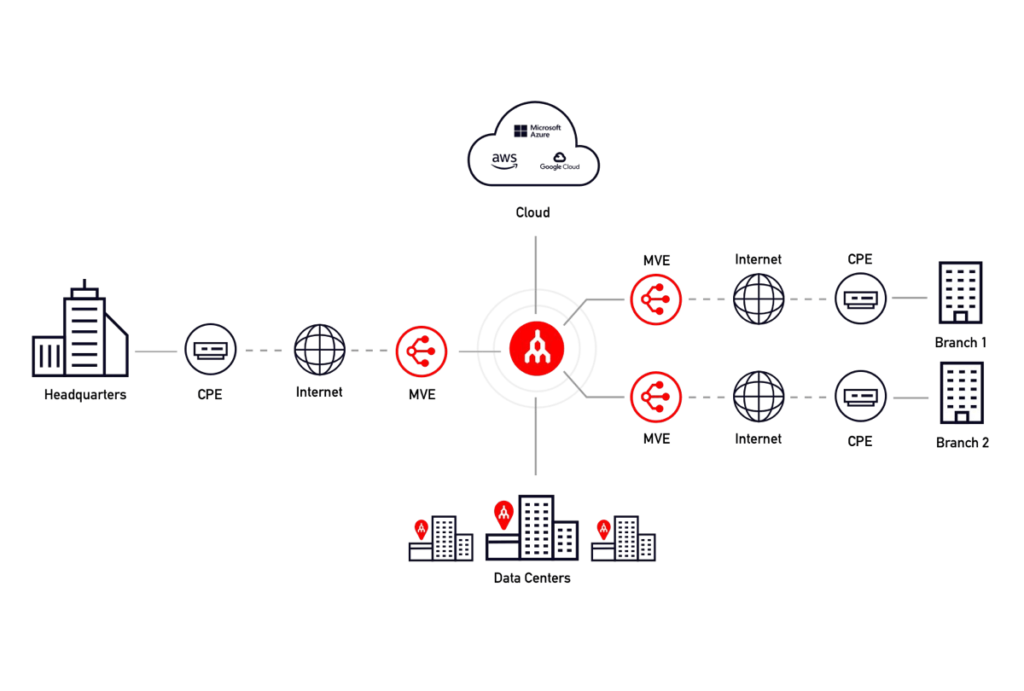

Transform your enterprise WAN with Megaport Virtual Edge (MVE). Enhance your SD-WAN fabric with low-latency, private, software-defined connectivity to critical applications and cloud providers. MVE enables agile, scalable, and cost-effective network solutions, delivering greater control over data flow and faster provisioning for modern business demands.

The concepts and design principles of creating a wide area network (WAN) to provide resilient and optimal transit between endpoints have continuously evolved over the years. But the driver behind building a better WAN has remained the same: to support applications that demand performance and resiliency.

The early days of MPLS were driven by services like VoIP and real-time video collaboration. WAN optimization technologies were soon introduced to maximize throughput using TCP optimization and caching for distributed applications and data replication. Then virtualization of compute and storage platforms evolved, and infrastructure moved to more capable data centers outside of the “server room” at the corporate headquarters. Now, our applications are even more widely distributed across IaaS and SaaS platforms, requiring even more WAN agility, scalability, and performance. In this post, we explain why reviewing and optimizing your underlay network should be your next step to getting the most from your WAN, and your SD-WAN.

Read “The Hidden Cost of Running Cloud-Hosted SD-WAN for IaaS.”

The relationship between SD-WAN overlays and underlays

The evolution of SD-WAN puts more control in the hands of the business, with less reliance on network carriers and service providers. One of the fundamental elements of SD-WAN is that it is transport agnostic: whether the transport is internet, MPLS, point-to-point, or a 5G segment, the brain of your SD-WAN continuously probes these multi-transport “underlays” to determine the best end-to-end network path. Every SD-WAN vendor has its own secret sauce for making that “best path” decision, with an array of application policy and traffic-shaping knobs and levers. These SD-WAN “overlay” functions rely on mechanisms such as Bidirectional Forwarding Detection (BFD) and Forward Error Correction (FEC) so that 1) the best WAN link is used, because BFD detects path failures quickly, and 2) costly retransmissions are reduced, because FEC lets the receiver reconstruct a dropped packet from redundant data. Lastly, SD-WAN overlays provide a matrix of traffic-shaping rules and thresholds for custom policies that map critical applications to one transport over another. However, even the most optimally tuned SD-WAN overlay is only as good as the underlay networks available.
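To make the “best path” idea more concrete, here is a minimal sketch of policy-based underlay selection. It is not any vendor's algorithm: the `Underlay` and `AppPolicy` types, the `pick_underlay` function, the tie-breaking order, and every number below are assumptions made up for illustration. The sketch ignores links that a liveness probe has marked down, prefers links that meet the application's thresholds, and falls back to the least-bad live link when none comply.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Underlay:
    """One transport as seen by the overlay (all fields are illustrative)."""
    name: str
    latency_ms: float
    jitter_ms: float
    loss_pct: float
    up: bool = True  # liveness, e.g. as reported by a BFD-style probe


@dataclass(frozen=True)
class AppPolicy:
    """Per-application thresholds (hypothetical policy shape)."""
    app: str
    max_latency_ms: float
    max_jitter_ms: float
    max_loss_pct: float


def meets_policy(link: Underlay, policy: AppPolicy) -> bool:
    """True when every measured metric is within the policy's thresholds."""
    return (
        link.latency_ms <= policy.max_latency_ms
        and link.jitter_ms <= policy.max_jitter_ms
        and link.loss_pct <= policy.max_loss_pct
    )


def pick_underlay(links: List[Underlay], policy: AppPolicy) -> Optional[Underlay]:
    """Pick a live underlay, preferring ones that satisfy the policy."""
    live = [link for link in links if link.up]
    if not live:
        return None
    compliant = [link for link in live if meets_policy(link, policy)]
    # Fall back to any live link if nothing meets the thresholds.
    candidates = compliant or live
    # Assumed ranking: lowest loss first, then latency, then jitter.
    return min(candidates, key=lambda l: (l.loss_pct, l.latency_ms, l.jitter_ms))


# Made-up measurements for three underlays and one application policy.
voice = AppPolicy("voice", max_latency_ms=150, max_jitter_ms=30, max_loss_pct=1.0)
links = [
    Underlay("internet", latency_ms=80, jitter_ms=25, loss_pct=1.0),
    Underlay("mpls", latency_ms=40, jitter_ms=5, loss_pct=0.1),
    Underlay("5g", latency_ms=60, jitter_ms=15, loss_pct=0.5),
]
best = pick_underlay(links, voice)
print(best.name if best else "no live underlay")
```

Real SD-WAN platforms weigh many more inputs (cost, link capacity, per-flow state), but the sketch shows why the overlay can only choose among the underlays it is given.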

These SD-WAN platforms have given us more agility and control over our WAN than ever. But having more control over the transport underlays and middle-mile segments would take the SD-WAN concept to the next level.

We break down the licensing of three major SD-WAN vendors in this blog post.

Designing and optimizing today’s WAN

When designing and optimizing today’s WAN, we typically draw the line at the network carrier or service provider (hand-off and demarc) and simply accept their terms of service, SLAs, and sometimes agonizing provisioning process. Internet transit today is clearly flexible and cost-effective, but it’s still unpredictable at times, especially as distances between endpoints and applications increase. MPLS provides more predictability, but at higher cost and with limited bandwidth flexibility. A dedicated point-to-point circuit will deliver the best performance, but its cost limits deployments to targeted paths and locations, so it won’t scale for today’s widely distributed applications, workflows, and data stores. With so many workloads distributed across multiple clouds, how do we get better performance and reach to our users at the edge?

We’ve seen how cloud service providers (CSPs) have expanded the power and scale available to organizations, which can now spin up compute and storage in AWS, GCP, and Azure within minutes as a true IaaS. The scale-up/scale-down cloud compute model also lets businesses maximize utilization of compute and storage resources.

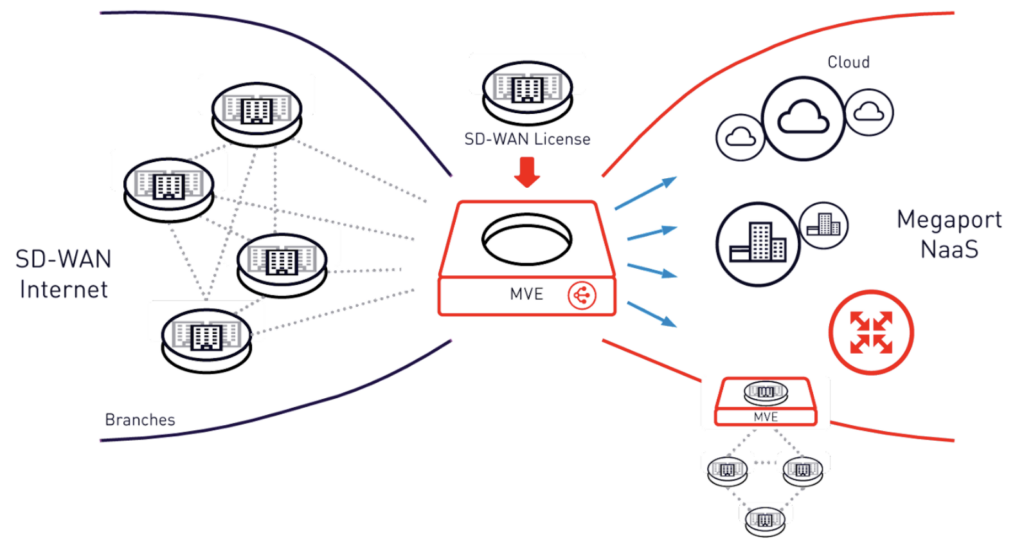

Using Network as a Service

By leveraging a leading Network as a Service (NaaS) provider, you can take advantage of deploying dedicated WAN connections in a similar way. Utilizing Software Defined Cloud Interconnection (SDCI) solutions like Megaport Virtual Edge (MVE) as the on-ramp for your SD-WAN enabled sites will transform your organization’s Infrastructure and Operations capabilities. The Megaport network is a native software-defined global platform built to provision private virtual circuits over a physical network within minutes, anywhere in the world, with access to major cloud destinations.

MVE enhances your existing enterprise SD-WAN platform by giving you the ability to strategically build optimal pathways to critical applications wherever they reside. A few use cases include:

- Direct cloud on-ramp connectivity reducing latency and egress costs.

- A long-haul connection that links users in a branch office to a colocated private cloud.

- A temporary point-to-point link to a recently acquired business to support application and data migration.

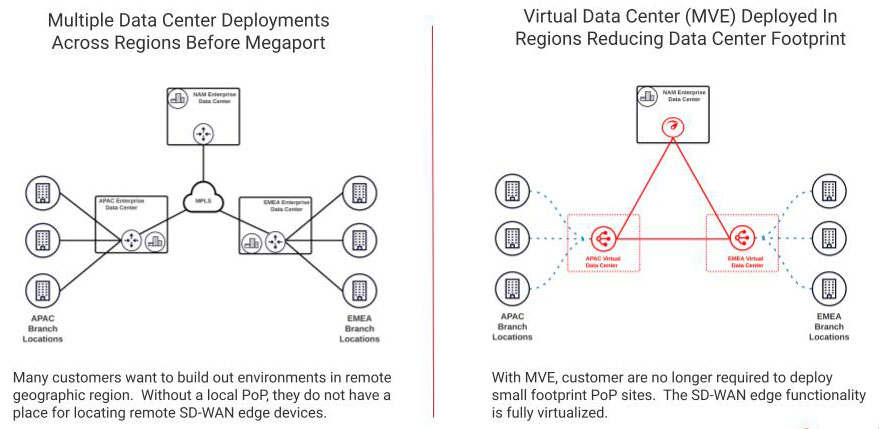

MVE essentially enables businesses to build their own regional PoPs within minutes while deploying a customized middle-mile to extend and optimize their WAN by leveraging Megaport’s global Software Defined Network (SDN). In a way, MVE delivers a hybrid SD-WAN “transport” with the benefits of predictable latency, dynamic provisioning, private layer 2 connectivity, and dedicated bandwidth, making it a preferred solution over MPLS for many customers.

Take a deeper dive into how SD-WAN compares to MPLS in our blog post.

MVE uses internet transit as the first mile for low-cost, low-latency local loop access for branch SD-WAN devices, joining the branch to powerful middle-mile (and in most cases, last-mile) connectivity to CSPs and marketplace/SaaS providers. IT and/or network managers now have the ability to provision these strategic WAN connections in less than 60 seconds (instead of 60 days).

Each MVE metro area has the equivalent of CSP “availability zones,” where two physically diverse data center operators can host your virtual SD-WAN appliance. MVE also includes diverse internet transit to your virtual SD-WAN appliance, with BGP peering to at least two ISPs. For organizations looking to build this on their own, provisioning diverse internet connections and a public ASN may take months. With MVE, all the benefits of a regional network PoP are delivered as a service, without going through a DIY data center deployment and provisioning process.

The “first mile” from the customer branch SD-WAN appliance to an MVE data center in a metro is the only segment of the WAN that uses internet transport, and in most cases it adds minimal latency.

The “middle mile” from the MVE in a metro is a private, layer 2, low-latency connection to anywhere on the Megaport network including:

- Direct cloud on-ramp (AWS, Azure, GCP, and others)

- Another remote regional branch in a metro with an MVE hub

- Private cloud hosted in one of 700+ Megaport enabled data centers

- Megaport marketplace of service providers
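The first-mile and middle-mile split described above can be sketched as a simple path model. This is only an illustration: the `Segment` type, the helper functions, the transport labels, and the latency figures are hypothetical and are not measurements of the Megaport network. The point it shows is that end-to-end latency is the sum of the segments, and that only the short first mile is exposed to the public internet.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Segment:
    """One hop of the WAN path (illustrative fields)."""
    label: str
    transport: str     # "internet" or "private-l2" (assumed labels)
    latency_ms: float  # made-up one-way latency


def total_latency_ms(path: List[Segment]) -> float:
    """End-to-end latency is the sum of the per-segment latencies."""
    return sum(segment.latency_ms for segment in path)


def internet_share(path: List[Segment]) -> float:
    """Fraction of the path latency spent on internet transport."""
    total = total_latency_ms(path)
    if total == 0:
        return 0.0
    internet = sum(s.latency_ms for s in path if s.transport == "internet")
    return internet / total


# Hypothetical branch-to-cloud path: internet first mile, private middle mile.
branch_to_cloud = [
    Segment("first mile: branch to MVE metro", "internet", 5.0),
    Segment("middle mile: MVE to cloud on-ramp", "private-l2", 20.0),
]

print(f"total latency: {total_latency_ms(branch_to_cloud):.1f} ms")
print(f"share on internet transport: {internet_share(branch_to_cloud):.0%}")
```

With numbers like these, most of the path runs over the private segment, which is where the predictability comes from.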

Conclusion

Today’s enterprise networks must embrace digital transformation to quickly adapt and deliver for their customers, and leveraging networking technologies is key. At its core, SD-WAN is all about shaping and steering application traffic across multiple WAN transports. Inserting Megaport Virtual Edge into your enterprise SD-WAN fabric gives your business greater flexibility, control over your data flow, cost optimization, and the agility to adapt to ever-changing business environments.
